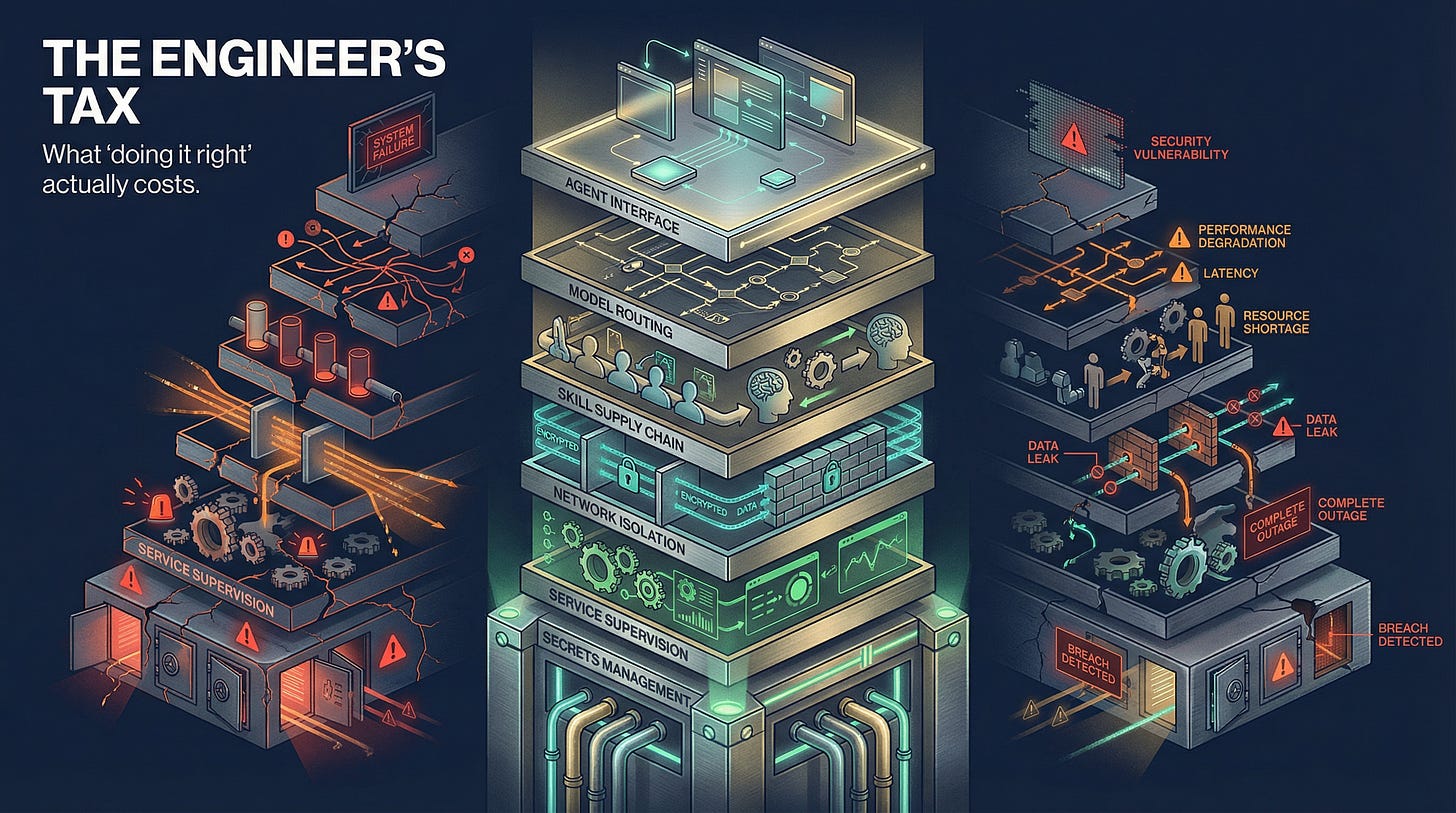

The Engineer's Tax

Every configuration failure I hit standing up OpenClaw in production — and what it tells you about the real cost of the sovereignty play

I want to give you a direct account of what it actually takes to run OpenClaw at production quality, because most coverage either treats it as a simple install or dismisses it entirely as too dangerous. The reality is more instructive than either.

I have 34 years in IT, a CISSP, and active doctoral research in AI systems security. Standing up OpenClaw the right way still took several weeks, surfaced dozens of edge cases, and required building several components that don’t come out of the box. Here is a specific and complete account.

Configuration Surface

OpenClaw’s behavior is governed by a single openclaw.json file that controls everything: which channel plugins initialize, how agents are defined, which models are available, how secrets are accessed, session and concurrency limits, memory plugins, and MCP server connections. The configuration surface is enormous, and most of it is undocumented or incompletely documented.

Channel plugins don’t start without explicit plugins.allow entries. Slack, Discord, Telegram, and WhatsApp are plugins — not built-in capabilities. None of them initialize without being listed in the plugins.allow array. There is no warning when a plugin fails to load. The gateway starts, appears healthy, and messages to those channels simply produce silence. I found this by reading the process table, not from any log message.
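Because a missing allow entry fails silently, a pre-deploy check is one way to catch it before the gateway starts. The sketch below assumes, hypothetically, that channel sections live under a top-level channels key and that each channel's key matches its plugin name in plugins.allow; adjust it to your actual config shape.

```python
import json


def channels_missing_from_allowlist(config: dict) -> list[str]:
    """Return configured channel names that are absent from plugins.allow.

    Assumption (not confirmed by OpenClaw documentation): channel sections
    sit under a top-level "channels" key and share their name with the plugin.
    """
    allowed = set(config.get("plugins", {}).get("allow", []))
    configured = config.get("channels", {})
    return sorted(name for name in configured if name not in allowed)


if __name__ == "__main__":
    # Hypothetical fragment: Slack and Discord configured, only Slack allowed.
    sample = json.loads(
        '{"plugins": {"allow": ["slack"]},'
        ' "channels": {"slack": {}, "discord": {}}}'
    )
    for name in channels_missing_from_allowlist(sample):
        print(f"channel '{name}' is configured but not in plugins.allow")
```

Running this against the real openclaw.json in a deploy script turns the silent failure into a loud one.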

The default model is stickier than the documentation implies. OpenClaw’s built-in OpenRouter integration defaults to Claude Sonnet 4.6. With models.mode: "merge" (the recommended setting), your custom entries are added to the built-in catalog — they don’t replace it. The openrouter/auto fallback silently routes to Sonnet. More importantly, if an earlier session ran a /model command, a modelOverride is written to the session JSON file and it persists across /new and restarts. GitHub issue #55063 documents this as a known bug. The practical consequence: an agent you’ve configured to run on MiniMax or Gemini may be silently running on Sonnet because of a modelOverride from a previous session. You’d only know by checking session state directly.
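Checking session state directly can be scripted. This is a minimal sketch, assuming sessions are stored as individual .json files in one directory and that the override appears as a top-level modelOverride key; both the layout and the directory path you pass in are assumptions to verify against your install.

```python
import json
from pathlib import Path


def find_model_overrides(session_dir: Path) -> dict[str, object]:
    """Map session file name -> modelOverride value for every file that has one.

    Assumptions: sessions are *.json files in a single directory and the
    override is a top-level "modelOverride" key.
    """
    found = {}
    for path in sorted(session_dir.glob("*.json")):
        try:
            data = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # unreadable or partially written file: skip, don't abort
        if isinstance(data, dict) and "modelOverride" in data:
            found[path.name] = data["modelOverride"]
    return found
```

An empty result means no session in that directory is pinning a model; anything else names the sessions to inspect or reset.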

Implicit main agent fallthrough. If there’s no explicit main entry in agents.list, OpenClaw falls through to the first listed agent’s configuration — including its model, workspace path, and instruction set. No warning, no error. If you’re DM’ing the system and you haven’t defined a main agent, you’re talking to whatever agent happens to be first in your list.

heartbeat: {} is the correct opt-in syntax; heartbeat.enabled crashes the gateway. The gateway throws Unrecognized key: "enabled" and enters a crash loop if you use heartbeat: { enabled: true }. The correct syntax is an empty heartbeat: {} object on each agent entry. Additionally, creating a HEARTBEAT.md in an agent’s workspace is necessary but not sufficient — the config entry is required separately. All agents fire on the main heartbeat schedule regardless of per-agent interval settings (GitHub issue #14986). Per-agent schedules require external cron jobs.
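Both agent-level pitfalls above (the missing main entry and heartbeat.enabled) can be caught before the gateway ever reads the file. The sketch below assumes each entry in agents.list is an object with an id field; that field name is illustrative, not taken from OpenClaw's schema.

```python
def lint_agents(config: dict) -> list[str]:
    """Return warnings for a missing main agent and for heartbeat.enabled.

    Assumption: agents.list is a list of objects carrying an "id" field.
    """
    warnings = []
    agents = config.get("agents", {}).get("list", [])
    if agents and not any(a.get("id") == "main" for a in agents):
        first = agents[0].get("id", "<unnamed>")
        warnings.append(f"no explicit main agent; DMs fall through to '{first}'")
    for agent in agents:
        heartbeat = agent.get("heartbeat")
        if isinstance(heartbeat, dict) and "enabled" in heartbeat:
            name = agent.get("id", "<unnamed>")
            warnings.append(
                f"agent '{name}': heartbeat.enabled is rejected by the "
                "gateway; use an empty heartbeat object instead"
            )
    return warnings
```

Failing the deploy when this list is non-empty is cheaper than diagnosing a crash loop after the fact.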

Secrets Management

The path of least resistance in OpenClaw is to put API keys directly in openclaw.json. This is the wrong path for a system that has credentials for every connected service and runs as a persistent background process.

I built secrets resolution around AWS SSM Parameter Store. The pattern: all secrets live in SSM, and a deploy script pulls them at startup using openclaw-ssm-resolve and injects them into the runtime environment before exec’ing the gateway. The gateway has a startup timeout, so resolution has to complete before that timeout expires. The utility reads JSON from stdin (not command-line arguments):

echo '{"ids":["key/path"]}' | /usr/local/bin/openclaw-ssm-resolve | python3 -c "import sys,json; ..."

Note: aws ssm get-parameter may not work on your instance depending on IAM role scoping and PATH configuration. The openclaw-ssm-resolve utility is more reliable in constrained environments. Test explicitly before you need it.
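For deploy scripts written in Python, the same stdin-based call can be wrapped with an explicit timeout so a slow resolution fails loudly instead of racing the gateway's startup timeout. This sketch assumes the resolver writes JSON to stdout; the 10-second default is illustrative, and the command is a parameter so the wrapper can be exercised against a stub.

```python
import json
import subprocess


def resolve_secrets(ids, command=("/usr/local/bin/openclaw-ssm-resolve",), timeout=10.0):
    """Send {"ids": [...]} on stdin to the resolver and return its parsed output.

    Assumption: the resolver prints JSON on stdout. The timeout should be
    set below the gateway's own startup timeout.
    """
    request = json.dumps({"ids": list(ids)})
    result = subprocess.run(
        list(command),
        input=request,
        capture_output=True,
        text=True,
        timeout=timeout,  # raises subprocess.TimeoutExpired if exceeded
        check=True,       # raises CalledProcessError on a non-zero exit
    )
    return json.loads(result.stdout)
```

Both exceptions should abort the deploy; starting the gateway with partially resolved secrets is worse than not starting it.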

Service Supervision

The user-level systemd service conflicts with the system-level service. OpenClaw’s beta builds install an openclaw-gateway.service at the user level that auto-starts on login and grabs port 18789 first. This service persists even after systemctl --user disable; it requires systemctl --user mask. I found this when my system-level service consistently failed to bind its port on startup.

The gateway forks and orphans survive systemctl stop. Issuing systemctl stop openclaw.service kills the start script but not the child gateway process. The orphaned process holds port 18789, preventing clean restarts. The fix is a systemd drop-in:

    [Service]
    KillMode=mixed
    ExecStopPost=/bin/bash -c 'pkill -9 -f "openclaw-gateway"'

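KillMode=mixed sends SIGTERM to the main process only; once the stop timeout expires, systemd sends SIGKILL to every process still left in the unit’s control group. The ExecStopPost line handles any survivors. Without both, you accumulate orphaned processes that you have to kill manually.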
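Both failure modes in this section show up the same way: something else already holds port 18789. A start script can check for that first and fail with a clear message. The following is a minimal sketch of such a probe; the loopback host is an assumption about where the gateway listens.

```python
import socket


def port_is_free(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if a TCP socket can bind host:port right now.

    This is a point-in-time probe: the port can still be taken between
    this check and the gateway's own bind.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
        try:
            probe.bind((host, port))
        except OSError:
            return False
    return True


if __name__ == "__main__":
    if not port_is_free(18789):
        raise SystemExit("port 18789 is already held; check for an orphaned "
                         "gateway or the user-level service")
```

The probe socket is closed before the function returns, so it does not itself block the gateway from binding.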

The --delete flag on deploy rsync clobbers runtime state. If you’re using rsync to deploy config updates, rsync --delete removes runtime files OpenClaw needs to persist — session state, memory files, plugin state. Add exclusions for these paths or you’ll lose session state and memory on every deploy.
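One way to keep the exclusions from drifting is to build the rsync command from a single list of protected paths. In this sketch the path names in the usage example are hypothetical; substitute whatever directories your install actually uses for session, memory, and plugin state.

```python
import shlex


def build_rsync_command(source: str, dest: str, runtime_paths: list[str]) -> list[str]:
    """Build an rsync argv that mirrors source to dest but protects runtime state.

    With --delete, an --exclude pattern also shields matching files on the
    receiving side from deletion, as long as --delete-excluded is not used.
    """
    cmd = ["rsync", "-a", "--delete"]
    for pattern in runtime_paths:
        cmd.append(f"--exclude={pattern}")
    cmd += [source, dest]
    return cmd


if __name__ == "__main__":
    # Hypothetical runtime directories and deploy paths.
    protected = ["sessions/", "memory/", "plugin-state/"]
    print(shlex.join(build_rsync_command("./deploy/", "/opt/openclaw/", protected)))
```

Running the printed command with rsync's --dry-run flag first shows exactly what would be deleted.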

Model Routing

MiniMax M2.5 and Gemini 3.1 Pro require reasoning: true. Both return 400 Reasoning is mandatory for this endpoint and cannot be disabled without this flag. When this error occurs, OpenClaw falls back silently to openrouter/auto (Sonnet) rather than surfacing the error. If you’re not watching gateway logs, you’ll never know. Add explicit model entries in models.providers.openrouter.models with reasoning: true. Also note that baseUrl must be present in the provider entry or config validation fails with a different error.
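Since the fallback is silent, the only signal is the rejection itself in the gateway logs. A small scanner can surface it. This sketch assumes the upstream error text appears verbatim somewhere in each relevant log line; the log format itself is not assumed.

```python
from typing import Iterable

REASONING_ERROR = "Reasoning is mandatory for this endpoint"


def find_reasoning_rejections(lines: Iterable[str]) -> list[tuple[int, str]]:
    """Return (line_number, text) for each log line containing the 400 message."""
    return [
        (number, line.rstrip("\n"))
        for number, line in enumerate(lines, start=1)
        if REASONING_ERROR in line
    ]
```

Feed it lines from wherever your gateway logs land; any hit means at least one request was rerouted to the fallback model.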

The mcpServers config key is beta-only. On stable OpenClaw builds, this key causes the gateway to crash with Unrecognized key: "mcpServers". MCP server configuration on stable requires the openclaw mcp set CLI command. Your deploy script needs to resolve any MCP secrets at deploy time and embed them in the CLI call.

Slack Multi-Agent Configuration

Bots ignore messages from other bots by default. This is the one that stopped cross-agent communication entirely. Adding "allowBots": true to the channels.slack configuration is required for one agent to read messages sent by another. The primary OpenClaw Slack integration documentation does not mention it; I found it in a GitHub issue comment.

@mentions require Slack user IDs, not display names. Writing @Forge produces plain text, not a functional Slack mention. A working mention uses the <@U0APD5TCT51> format with the actual Slack user ID, so each agent needs its teammates’ Slack user IDs hardcoded in its system prompt or configuration.
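If agents still tend to write display names, a post-processing step can rewrite them before the message is sent. This is a sketch of that idea, not something OpenClaw provides; the name-to-ID mapping is supplied by you, and the pairing of Forge with the ID below is illustrative.

```python
import re


def render_mentions(text: str, user_ids: dict[str, str]) -> str:
    """Replace @Name with Slack's <@USER_ID> form for every known name.

    Unknown names are left untouched so they remain visible as plain text.
    """
    def substitute(match: re.Match) -> str:
        user_id = user_ids.get(match.group(1))
        return f"<@{user_id}>" if user_id else match.group(0)

    # The lookbehind skips text already in <@...> form and email-like strings.
    return re.sub(r"(?<![<\w])@(\w+)", substitute, text)


if __name__ == "__main__":
    team = {"Forge": "U0APD5TCT51"}  # illustrative mapping
    print(render_mentions("@Forge please review the deploy script", team))
```

Keeping the mapping in one place also gives you a single file to update when an agent's Slack app is reinstalled and its user ID changes.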

dmPolicy: "pairing" requires re-pairing per agent. With six agents, that’s six separate pairing flows per user. Switching to dmPolicy: "allowlist" with the team user’s Slack ID pre-authorized is much more manageable.

Reaction-based feedback is required for usability outside threads. Default streaming: "partial" only shows typing indicators inside threads. In direct messages, users see no indication the agent received their message. Set ackReaction: "eyes" and typingReaction: "hourglass_flowing_sand" with streaming: "progress".

Skill Supply Chain

The community skills registry was hit by a supply chain attack this year — 800+ malicious skills deployed before detection. Every skill is code that runs on your machine with the permissions your agent has. Those permissions can include file system access, network access, and credentials for every connected service.

My practice: vet every skill before installation, prefer skills with substantial community adoption and activity over new entries regardless of star count, and write custom skills for anything touching sensitive data or credentials.

The Mem0 Plugin

The default LLM configuration in Mem0 references gpt-4-turbo-preview, which has since been deprecated. Memory capture fails silently with 404 The model 'gpt-4-turbo-preview' does not exist. Your agent simply can’t learn from conversations, and the missing memory never surfaces as an error. Fix:

    "llm": {
      "provider": "openai",
      "config": { "model": "gpt-4o-mini" }
    }

What All of This Means

Taken together: running OpenClaw at production quality is roughly equivalent to deploying a production web application with a custom service supervision setup, a secrets management pipeline, and several undocumented integration requirements.

That’s not insurmountable for an engineering team. It is substantially more than most non-technical evaluators expect when they read “install OpenClaw.”

The reason I’m describing this in detail is not to discourage adoption. It’s to provide an honest accounting of what the sovereignty play actually costs. The capability available on the other side of this setup is substantial. I’ll describe it in the next post.

But the setup is the prerequisite. For organizations without the infrastructure background to do it correctly, the risk of doing it incorrectly is proportional to the access the agent has. An always-on agent with credentials for every connected system, running skills from a community registry with a documented supply chain vulnerability, on a misconfigured service that doesn’t isolate properly — that’s not a productivity tool. That’s a persistent attack surface.

Do it right or use a managed option. There is no third path.