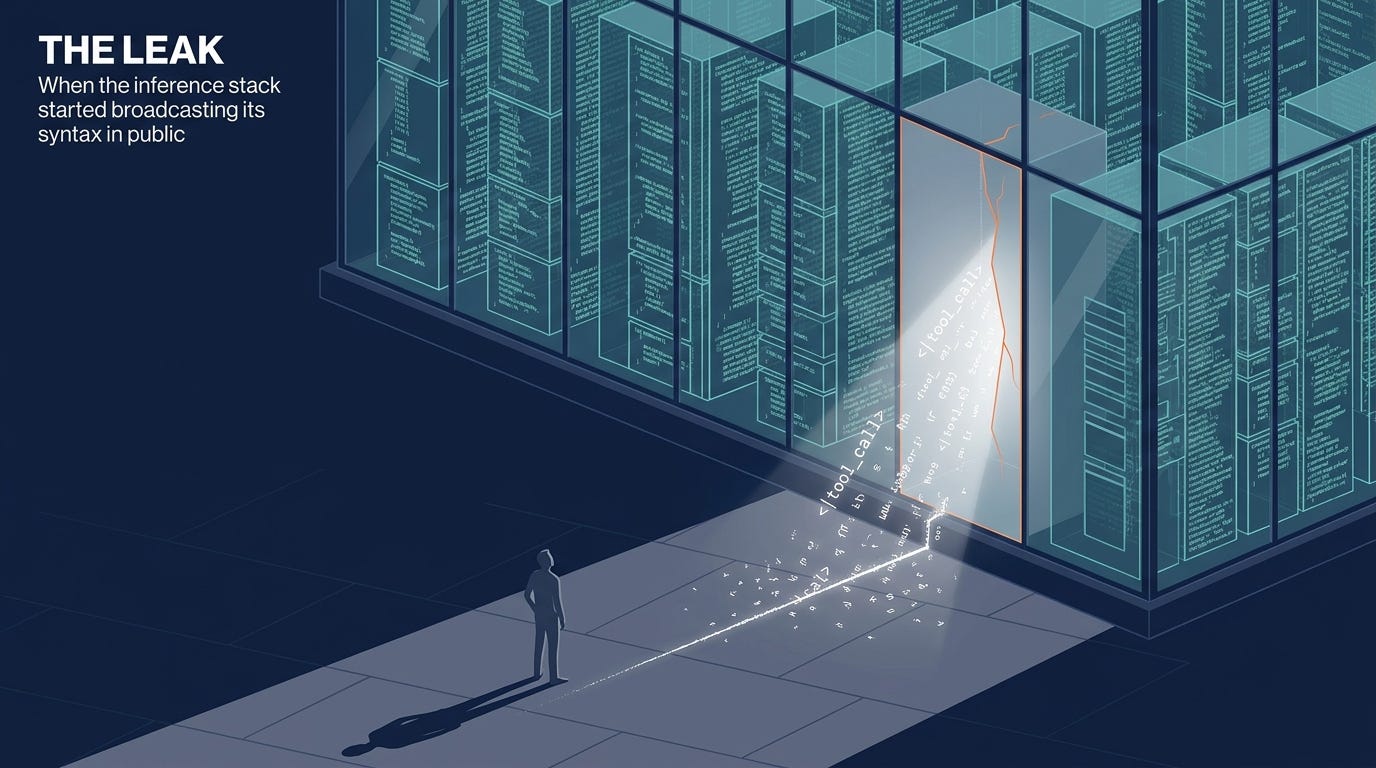

Claude Agent Series: The Leak

How a marketing agent started broadcasting its own tool calls into Slack — and what it took to understand why

I was in bed when it showed up in Slack.

Not an alert. Not a stack trace. A message from Compass — my marketing agent, the one that handles LinkedIn drafts and Blotato staging and Notion updates — that read:

Compass <|tool_call>call:exec{command:<|"|>gh auth switch -u erikdj<|"|>}<tool_call|> (edited)

That’s the raw syntax of a tool call. Not the execution of one — the text of one, posted into a public Slack channel as a message, as if Compass had decided to narrate what she was attempting rather than attempt it.

It was 04:17 UTC on April 22nd. I had been awake for the better part of nineteen hours, debugging an unrelated Slack socket-mode regression. I had just authorized a reboot, deployed a fork of OpenClaw with a cherry-picked fix I believed addressed this exact class of problem, and gone back to monitoring from my phone.

The fork hadn’t fixed it.

What a content-delta leak actually is

A tool call, at the protocol level, travels as a structured object: delta.tool_calls, finish_reason: tool_calls. When a model emits a tool call correctly, that is what arrives in the stream: structured JSON that the inference layer parses and routes to the appropriate handler.

A content-delta leak is when that structure breaks down. The model emits the tool-call syntax as delta.content with finish_reason: stop. The parser gets plain text instead of a structured payload. Nothing executes. The literal token string — <|tool_call>call:exec{command:…}<tool_call|> — goes wherever content goes. In this case: Slack.
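To make the distinction concrete, here is a minimal Python sketch that labels a finished stream. It assumes OpenAI-style chat-completion chunk dictionaries, the shape an OpenAI-compatible server streams back. The name classify_stream and its three labels are mine, for illustration only; they are not part of OpenClaw or vLLM.

```python
from typing import Iterable

# Opening delimiter copied from the leaked Slack message.
LEAK_MARKER = "<|tool_call>"


def classify_stream(chunks: Iterable[dict]) -> str:
    """Label a finished stream as 'tool_call', 'leak', or 'text'.

    Each chunk is assumed to look like an OpenAI-style chunk:
    {"choices": [{"delta": {...}, "finish_reason": ...}]}
    """
    content_parts = []
    saw_structured_call = False
    finish_reason = None

    for chunk in chunks:
        for choice in chunk.get("choices", []):
            delta = choice.get("delta") or {}
            if delta.get("tool_calls"):
                saw_structured_call = True
            if delta.get("content"):
                content_parts.append(delta["content"])
            if choice.get("finish_reason") is not None:
                finish_reason = choice["finish_reason"]

    # Join before searching: the marker can be split across several deltas.
    content = "".join(content_parts)
    if LEAK_MARKER in content:
        return "leak"
    if saw_structured_call and finish_reason == "tool_calls":
        return "tool_call"
    return "text"
```

Run against captured chunks, a leak like the one in Slack comes back as "leak" even when the delimiter arrives in pieces, while a healthy call with delta.tool_calls and finish_reason: tool_calls comes back as "tool_call".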

Compass runs on Gemma 4 31B, our self-hosted model on a DGX Spark. Gemma 4 has its own special tokens for tool calls, and they’re distinctive-looking — that <|tool_call> delimiter is Gemma-specific. So when I saw that Slack message, I immediately knew what I was looking at: a content-delta leak. The model was emitting tool call syntax as plain text instead of routing it through the structured tool-call mechanism.

What I did not know: why.

The first hypothesis

I need to be honest about how fast the first hypothesis arrived, and how confident I was in it.

I had spent the previous several hours working with drclaw-Claude — the Claude Code agent running on the EC2 instance that hosts our OpenClaw gateway — on a completely different problem: GitHub App authentication for a fork I’d asked it to build. The work had been extensive. Setting up the keypair, authorizing the DevClawBot app, testing write access by opening and closing a GitHub issue. Long, detailed, context-rich conversations about GitHub auth procedures and gh-app-token workflows.

Compass runs on Mem0 — a long-term memory system that captures context from her interactions. And the specific tool call she had leaked was gh auth switch -u erikdj. My GitHub username. The authentication command from the work we’d been doing.

The story assembled itself: Compass had somehow absorbed our GitHub authentication conversations into her Mem0 long-term memory, mistakenly believed that running gh auth switch was part of her own operational context, and was now attempting it on behalf of tasks that had nothing to do with GitHub — like the brand-voice review Atlas had actually assigned her.

It was a tidy, plausible, internally consistent explanation. Cross-contamination of long-term memory from adjacent agent contexts. The fix was obvious: find and delete the contaminated memories.

I told drclaw-Claude to run the Mem0 wipe.

The wipe

We went to Qdrant directly, since the wrapper script’s timestamp filter was broken. A direct scroll of the memories collection found fifteen recent points. Of those, eleven were explicitly about GitHub auth procedures: gh-app-token workflows, DevClawBot token refresh patterns, gh auth switch steps. As far as I could tell, Compass had no operational reason to hold any of that knowledge. We deleted all eleven.

At 04:25, the targeted delete succeeded. Eleven contaminated memories, gone.

At 04:25, I also typed the message that would change the direction of the entire investigation.

Pivot

“I think you’re approaching this wrong. Our patched openclaw code should have already fixed this. I just tried Compass again with a new session — no session memory — and I got another tool call message. This is not a memory pollution issue. It’s a model/parser issue.”

Fresh session. No Mem0 state. Same leak.

The contamination theory was falsified in a single test I hadn’t thought to run. The clean session was the counterfactual — if memory poisoning was the cause, a session with no prior memory shouldn’t exhibit the same symptom. It did. The memory was irrelevant.

Here’s what I’d done in the previous three minutes: deleted eleven legitimate memories from a production agent. Those memories documented how Atlas and Forge run gh-app-token — knowledge Compass needed, stored correctly, collected by the system working as designed. I’d destroyed them because they looked contaminated in the context of a theory that was wrong.

The wipe had no effect on the bug. It had real effects on the knowledge state of the fleet.

What the timeline looked like from the outside

Before the leak showed up, drclaw-Claude and I had already been working for nearly twelve hours on a different problem: an OpenClaw socket-mode regression in versions 4.14 through 4.15-beta.2. Seven Slack apps, constant socket churn, events stopping mid-session. We’d traced it to channelStaleEventThresholdMinutes defaulting to 30 minutes and accumulating stale connections across seven bots. Related to upstream issue #67672. Unresolvable in the current versions. Decision: pin back to 2026.3.28.

Then, while I slept, drclaw-Claude built the fork. Cherry-picked commit 71bd9e0 from upstream PR #61956: a one-line fix in src/agents/openai-ws-message-conversion.ts for malformed tool-call args being silently replaced with empty objects. A different Gemma bug, but a real one. pnpm install, pnpm build, 1 minute 35 seconds. Version bumped to 2026.3.28-erikdj-gemma-fix.1. Tarball packed. Published to GitHub. DevClawBot authorized.

I’d authorized the reboot at 04:09. The fork went live.

Eight minutes later: the leak.

The fork had been built in confidence that it addressed the class of problem we were looking at. It addressed a different problem from the same class. When Compass leaked at 04:17, drclaw-Claude noted — cautiously, accurately — “5 hits in 4 minutes — higher than yesterday’s rate. The fork didn’t stop the leaks.” That observation was correct and I wasn’t ready to hear it yet. Instead I focused on the specific content of the leak (gh auth switch) and built the contamination theory.

This is the part I want to flag for anyone running agentic systems in production: the path to the wrong diagnosis was paved with correct individual observations. The GitHub auth context in Mem0 was real. The memories looked wrong in isolation. The contamination theory was consistent with all the evidence I had. The problem was that I hadn’t generated the counterfactual — clean session, same prompt — before acting on the theory.
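The counterfactual I skipped is small enough to sketch. The Python below is a rough illustration, not what we actually ran: send is a hypothetical callable that delivers a prompt to the agent in a brand-new session with no memory attached and returns the reply text, and the function names are invented for this example.

```python
import uuid
from typing import Callable

# Opening delimiter copied from the leaked Slack message.
LEAK_MARKER = "<|tool_call>"


def count_fresh_session_leaks(
    send: Callable[[str, str], str], prompt: str, trials: int
) -> int:
    """Send the same prompt in `trials` new sessions and count leaked replies.

    `send(session_id, prompt)` is a hypothetical hook; it must start a
    session that carries no prior memory for the test to mean anything.
    """
    leaks = 0
    for _ in range(trials):
        session_id = f"counterfactual-{uuid.uuid4()}"
        reply = send(session_id, prompt)
        if LEAK_MARKER in reply:
            leaks += 1
    return leaks


def memory_theory_survives(fresh_session_leaks: int) -> bool:
    """If sessions with no memory still leak, memory cannot be the cause."""
    return fresh_session_leaks == 0
```

Anything above zero from the fresh sessions rules out the memory theory before a single point is deleted. Zero leaks does not prove the theory, since the leak was intermittent, but it at least leaves it standing.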

Where we stood at 04:26

Two mistakes in nine minutes.

Mistake one: the Mem0 wipe. Eleven legitimate memories deleted from a production agent based on a theory that hadn’t been tested against the minimal-case counterfactual.

Mistake two (earlier, less visible): the fork, built with high confidence for the wrong bug. This wasn’t fully understood yet. The fork was still running. We still didn’t know where the leak actually lived.

What we did know, after my message at 04:25: the bug was upstream of Compass’s conversation state. It wasn’t a memory issue. It was somewhere between the model and the parser. The fix wasn’t in OpenClaw’s deserialization layer.

We needed to see the wire.

Instrumentation

The next hour was about building the sensor. Not another hypothesis — a measurement tool. drclaw-Claude started probing vLLM directly via curl: tools, streaming mode, prior-leak history in context. Inconclusive. The reproduction was unreliable from the outside. My instinct at 04:28 — “what if we set up additional logging on the vLLM side? We’re kind of guessing” — was exactly right, and it pointed toward the real break in the investigation.
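The three things being varied in those probes (tools, streaming mode, prior-leak history in context) form a small matrix. The sketch below is my reconstruction in Python rather than the curl commands actually used. It assumes the request bodies go to an OpenAI-compatible chat-completions route such as /v1/chat/completions; the model name, prompt, and tool schema are placeholders supplied by the caller, and probe_payloads and parse_sse are illustrative names.

```python
import itertools
import json
from typing import Iterable, Iterator


def probe_payloads(
    model: str, prompt: str, tool: dict, leaked_text: str
) -> Iterator[dict]:
    """Yield one chat-completions request body per probe condition.

    Conditions: streaming on/off, tools on/off, prior leak in context on/off.
    """
    for stream, with_tools, with_history in itertools.product(
        (False, True), repeat=3
    ):
        messages = []
        if with_history:
            # Replay an earlier leak as an assistant turn, to check whether
            # seeing leaked syntax in context changes the model's behaviour.
            messages.append({"role": "user", "content": prompt})
            messages.append({"role": "assistant", "content": leaked_text})
        messages.append({"role": "user", "content": prompt})

        body = {"model": model, "messages": messages, "stream": stream}
        if with_tools:
            body["tools"] = [tool]
        yield body


def parse_sse(lines: Iterable[str]) -> Iterator[dict]:
    """Decode the 'data: {...}' lines of a streamed response into chunks."""
    for line in lines:
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank lines and comments
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break
        yield json.loads(data)
```

Because the leak was intermittent, each of the eight conditions would need to be sent many times and tallied before the matrix says anything. That is the limit of probing from the outside: it shows what came back, not what the server saw before it parsed.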

We were guessing. And we’d been guessing wrong.

The wire was the answer. Getting there required access to the machine. Access to the machine required a key. A key required my other Claude.

That’s the next post.

Part of a 5-post series on a 21-hour production debugging session.