All the Claws

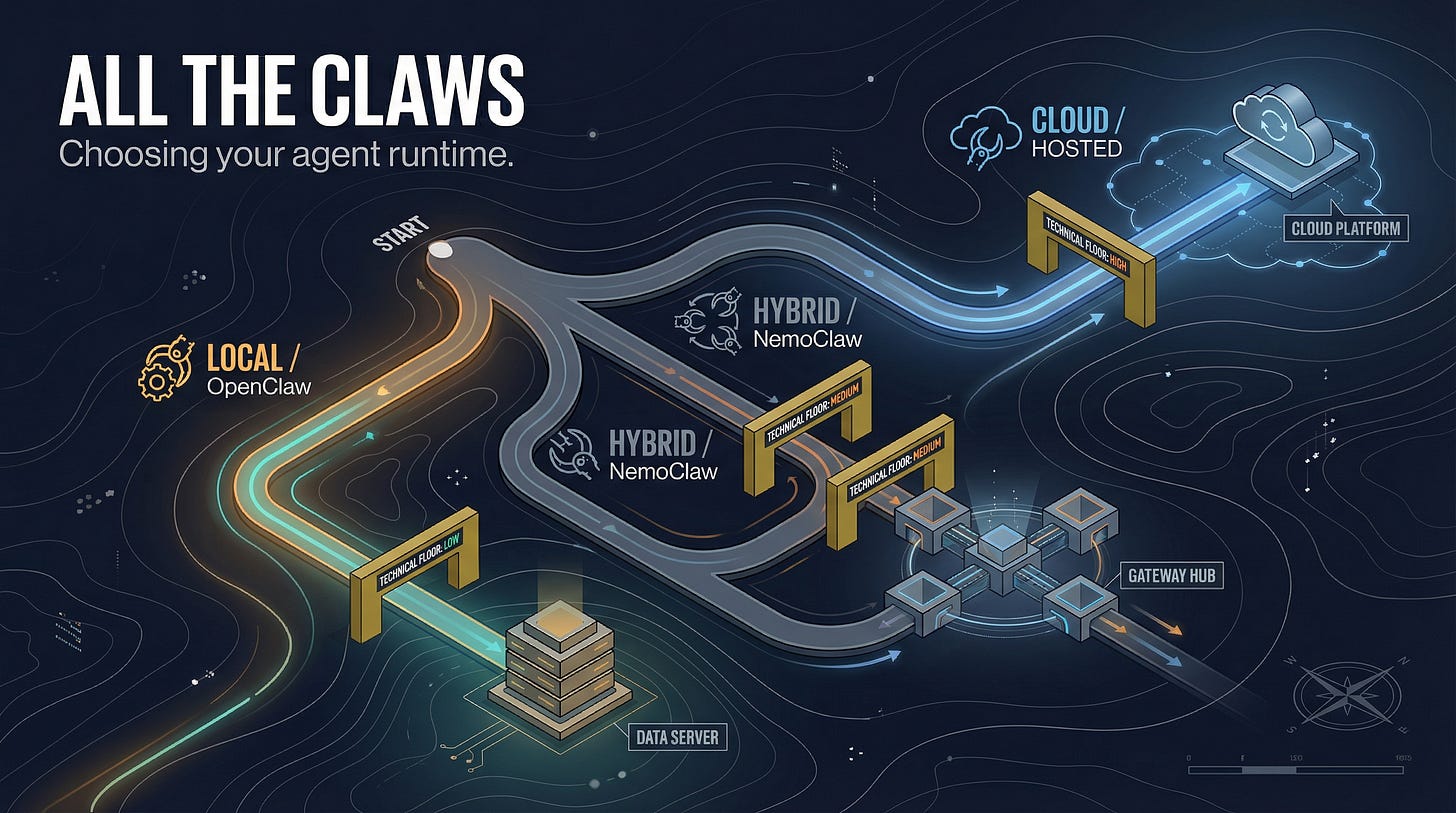

The ecosystem of agent runtimes, why NemoClaw didn't solve the problems I thought it would, and how to actually choose

The naming convention started as a joke and then became the most efficient way to describe a fracturing product category.

Within six weeks of OpenClaw going viral, the open-source ecosystem had spawned over a dozen serious forks. NanoClaw: 700 lines of TypeScript, built the day after OpenClaw’s rebrand, specifically because the original’s 430,000 lines are too large for any human to audit. ZeroClaw: rewrote the whole thing in Rust. Nanobot: 4,000 lines of Python, out of Hong Kong. Each fork attacked a specific weakness of the original — security, performance, auditability, resource footprint. Each gathered thousands of GitHub stars.

Then the enterprises arrived. NVIDIA built NemoClaw, a kernel-level security sandbox around OpenClaw itself. Abacus launched AbacusClaw. A cottage industry of boutique “claw hosting” providers emerged. The “Mac mini craze” — a wave of people buying dedicated Mac minis for local agent infrastructure — became real enough that Perplexity launched a specific product for it.

For someone trying to make an actual adoption decision, the proliferation is noise unless you have a framework for the real differences. Here’s what I found after working through several of them.

The Options, Applied to the Three Axes

OpenClaw (the original): Runs locally, on your machine. Model-agnostic: any LLM, any combination. Interface is messaging-native: Slack, Telegram, Discord, WhatsApp, iMessage, Signal.

The strategic position is explicit: maximum sovereignty, maximum flexibility, maximum operational cost. Running OpenClaw correctly requires the same discipline as running a production web application. More on the specifics in the next post.

NemoClaw (NVIDIA): Runs locally, inside an OpenShell sandbox with Landlock and seccomp kernel-level isolation. Inference routes through a proxy to OpenRouter (hundreds of models, but through a single bottleneck). Same messaging-native interface as OpenClaw.

The pitch is Red Hat for Linux: take the raw capability and make it safe enough for organizations. The kernel-level sandboxing is real. The implementation has significant gaps, which I describe in detail below.

Perplexity Computer (cloud): Runs in the cloud, sandboxed, with no local file access in the standard tier. Multi-model, routing across 19 providers. Interfaces: web dashboard, iOS, Android, Comet browser. A separate “Personal Computer” product on a dedicated Mac addresses the local execution market.

The delegation play. You describe what you need; you don’t manage the infrastructure. $200/month consumer, $325/seat enterprise.

Anthropic Dispatch: Runs locally on your desktop, which must stay awake and connected. Single-model: Claude. Phone-to-desktop interface.

The “properly done” play. Research preview, ~50% reliability on complex tasks per early testing. The strategic bet is long-term safety reputation in the professional tier.

Meta Manus: Cloud plus a local desktop app with permission-gated local file access. Meta’s model stack. Consumer-native: every local action requires explicit user approval.

The distribution play. Reach through three billion Meta users is the moat.

The NemoClaw Experience

I spent meaningful time on NemoClaw because its value proposition fit my security requirements. Kernel-level sandboxing via Landlock and seccomp means you can open up OpenClaw’s internal permissions inside the sandbox without exposing the host system. For multi-agent setups where agents need file access and tool execution, this matters.

Here is what I actually encountered.

The configuration file is locked. The openclaw.json inside the sandbox is read-only, with a sha256 hash pinned at build time. No official mechanism to customize it. The configuration controls everything: which channel plugins initialize, which models are available, how agents are configured. GitHub issue #773 in the NemoClaw repository documents this as a known bug, filed March 24, 2026. Still open. The community workaround: docker exec -it <container> bash as root to edit the file directly. Which bypasses the integrity check. Which defeats the entire security model.
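To make the problem with that workaround concrete, here is a minimal sketch of how a build-time hash pin behaves. The function names and the config contents are hypothetical stand-ins; NemoClaw's actual check is not shown here and may be implemented differently.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as lowercase hex."""
    return hashlib.sha256(data).hexdigest()


def config_is_untampered(config_bytes: bytes, pinned_hex: str) -> bool:
    """Compare the live config's digest against the hash pinned at build time."""
    return hmac.compare_digest(sha256_hex(config_bytes), pinned_hex.lower())


# Hypothetical config contents, standing in for openclaw.json.
original = b'{"channels": ["slack"]}'
pinned = sha256_hex(original)  # recorded once, when the image is built

# What a root shell edit inside the container would produce.
edited = b'{"channels": ["slack", "discord"]}'

print(config_is_untampered(original, pinned))  # True
print(config_is_untampered(edited, pinned))    # False
```

Any edit changes the digest, so customizing the file means either skipping the check or re-pinning the hash by hand. Either way, the pin no longer vouches for the file.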

The inference routing constraint is architectural. All inference routes through the OpenShell proxy. One active route at a time. In the context of my setup — where I’m running six agents, each on a different model, with a local vLLM instance on my DGX Spark for sensitive data that can’t leave the network — this is a hard limit. The agents that benefit most from model diversity lose that diversity inside NemoClaw.
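A small sketch of why a single active route is a hard limit for this kind of setup. Everything here is illustrative: the agent names, route names, and URLs are placeholders I made up, and the `collapse` function only models the one-route-at-a-time behavior described above, not OpenShell's actual proxy.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Route:
    name: str      # hypothetical label for an inference endpoint
    base_url: str  # placeholder URL, not a real service
    local: bool    # True if requests never leave the local network


# Placeholder routes; the local URL assumes an OpenAI-compatible server.
LOCAL_VLLM = Route("local-vllm", "http://localhost:8000/v1", local=True)
HOSTED = Route("hosted", "https://example.invalid/v1", local=False)

# Hypothetical agents; "records" handles data that must stay on the network.
PER_AGENT: Dict[str, Route] = {"researcher": HOSTED, "records": LOCAL_VLLM}
SENSITIVE_AGENTS = {"records"}


def collapse(routes: Dict[str, Route], active: Route) -> Dict[str, Route]:
    """Model a one-active-route proxy: every agent is sent to the same route."""
    return {agent: active for agent in routes}


def violations(routes: Dict[str, Route]) -> List[str]:
    """Return sensitive agents whose route would leave the local network."""
    return sorted(a for a in SENSITIVE_AGENTS if not routes[a].local)


print(violations(PER_AGENT))                         # []
print(violations(collapse(PER_AGENT, HOSTED)))       # ['records']
print(violations(collapse(PER_AGENT, LOCAL_VLLM)))   # []
```

With one route, the only configuration that keeps sensitive data local also forces every other agent onto the local model, which is exactly the loss of diversity described above.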

Hardware requirements are underspecified. Documented minimum: 4 vCPU, 8GB RAM. Actual: the OpenShell container pushes 6GB RSS during sandbox image push. I hit OOM-kills on a 2 vCPU / 7.6GB instance and had to resize to 4 vCPU / 15GB before it stabilized.

Policy preset bugs. GitHub issue #481: Discord/Telegram/Slack preset policies ship without binaries entries in the OPA allowlist. The proxy returns 403 on all HTTPS CONNECT despite the policy appearing to apply. Solvable, but requires reading OPA policy files.

After working through these issues, I abandoned NemoClaw. The security value proposition is real in principle. The implementation is alpha-quality in ways that matter for production use. I stayed on OpenClaw with application-level controls and tighter operational discipline.

The Boutique Hosting Question

The “Mac mini craze” reflects genuine demand: people want local agent capability without the infrastructure complexity of running OpenClaw themselves. A class of boutique hosting providers has emerged to serve that demand.

The honest assessment: security posture in this space varies wildly and is largely unverifiable. When you outsource the infrastructure to a hosting provider, you’re trusting their security practices with an agent that has credentials for everything it’s connected to. For low-stakes use cases, this may be acceptable. For organizations with regulated data, it almost certainly isn’t — not because hosting providers are untrustworthy, but because “trust us” doesn’t satisfy HIPAA, FedRAMP, or SOC 2 audit requirements. You need specific controls, specific evidence, and specific contractual commitments. The boutique hosting market doesn’t have established patterns for any of that yet.

How to Actually Choose

Three questions that cut through the noise.

First: What is your data governance requirement? If any data your agents will process is regulated (PHI, PII subject to GDPR, ITAR-controlled data, financial data under GLBA), resolve the compliance question before the technology question. The compliance question may determine your deployment model entirely.

Second: What is your technical floor? OpenClaw at production quality requires infrastructure staff. If you don’t have someone who can manage a production Linux service, vet package dependencies, configure secrets management, and respond to a compromise incident, you don’t have the OpenClaw option regardless of how much you want the capability. This isn’t elitism; it’s a straightforward capability requirement.

Third: What do you actually need multi-model routing for? If your use case is a single general-purpose assistant, single-model is fine. If you’re building a specialized agent team where different roles benefit from different model capabilities, you need the model-agnostic path. That currently means OpenClaw. Plan accordingly.
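The three questions can be written down as a rough checklist. This is a sketch of the reasoning above, not a selection tool: the `Situation` fields and the wording of the notes are my own shorthand, and real decisions will have more inputs than three booleans.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Situation:
    handles_regulated_data: bool  # question one: data governance
    has_infra_staff: bool         # question two: technical floor
    needs_per_role_models: bool   # question three: multi-model routing


def advise(s: Situation) -> List[str]:
    """Return notes in the order the three questions are asked."""
    notes: List[str] = []
    if s.handles_regulated_data:
        notes.append("Resolve compliance before choosing technology.")
    if not s.has_infra_staff:
        notes.append("Self-run OpenClaw is not an option without infrastructure staff.")
    if s.needs_per_role_models:
        if s.has_infra_staff:
            notes.append("Per-role models: take the model-agnostic path (OpenClaw).")
        else:
            notes.append("Per-role models point to OpenClaw; close the staffing gap first.")
    else:
        notes.append("A single-model option is fine.")
    return notes


for note in advise(Situation(True, False, True)):
    print(note)
```

The ordering is the point: the compliance note always comes first, and the staffing answer constrains what the third question can recommend.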

The choice is not primarily a capability question. At the frontier, every option is capable enough for most tasks. The differentiators are security posture, compliance compatibility, operational requirements, and model flexibility. Evaluate on those axes, not the feature list.